Immersion vs Illusion: Why Atmos Binaural Isn’t What You Think It Is

Spatial audio is powerful, but only when it’s real: this is a mastering engineer’s take on psychoacoustics, Atmos, and the rise of headphone-based trickery

I don’t work in Atmos.

Not because I’m against it. I remember sitting in an Atmos room at AES NY 2019 and feeling goosebumps. I believe spatial audio has extraordinary creative potential. But doing it properly requires a full-range, certified monitoring environment: a real room with real translation. And I’m not going to fake it.

I monitor on ATC SCM45A Pros, among the most revealing stereo speakers available. I trust them with the final 5%. Building a Dolby-certified Atmos room that maintains that level of resolution would take tens of thousands of dollars in speakers alone, plus a precise room geometry most spaces can’t realistically accommodate. So I don’t offer Atmos. Not because I don’t care, but because I care too much.

That’s the genesis of this post. Because increasingly, I see engineers claiming Atmos expertise based on headphones alone. AirPods Max. Spatial audio plugins. A few ceiling-mounted KRKs in an untreated home studio and a vague sense of object placement. But spatial perception doesn’t work like that.

You can’t call your room a Dolby Atmos studio unless it meets Dolby’s certification requirements. These include:

- A minimum 7.1.4 speaker layout
- Speakers placed within strict tolerances for height, angle, and distance
- Full-range monitoring (ideally down to 40 Hz)
- Calibrated EQ and delay alignment
- Approved room correction
- A certified renderer and monitoring control path

If you’re using basic consumer monitors, ceiling rigs held up with shelf brackets, or worse — folding everything down to headphones and calling it immersive — you’re not working in Atmos. You’re working in simulation. And for mastering, that distinction matters more than most people realize.

How We Actually Hear in Space

To understand why Atmos binaural is a compromise, you need to know how humans localize sound. Our ears aren’t just inputs. They’re a directional processing system, evolved over millions of years.

There are three main ways we perceive spatial origin:

1. Interaural Time Differences (ITDs)

At low frequencies (under ~800 Hz), the brain compares when a sound arrives at each ear. A 0.5 ms delay between the ears is enough to place a sound well off to one side. The system is incredibly precise: for sources straight ahead, people can distinguish left/right changes as small as about 1°.
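To put rough numbers on those delays, here is a minimal sketch using Woodworth's spherical-head approximation, ITD = (r/c)(θ + sin θ). The formula is a textbook simplification, not something taken from a measurement of anyone's head, and the 8.75 cm head radius and 343 m/s speed of sound are assumed typical values. The function name `itd_woodworth` is mine, for illustration only.

```python
import math

def itd_woodworth(azimuth_deg, head_radius_m=0.0875, speed_of_sound=343.0):
    """Approximate interaural time difference, in seconds.

    Assumes a distant source and a rigid spherical head (Woodworth's model).
    azimuth_deg: 0 = straight ahead, 90 = directly to one side.
    head_radius_m and speed_of_sound are assumed typical values.
    """
    theta = math.radians(azimuth_deg)
    return (head_radius_m / speed_of_sound) * (theta + math.sin(theta))

# Print the modeled delay for a few source angles.
for az in (0, 1, 30, 90):
    print(f"{az:>2} deg -> {itd_woodworth(az) * 1e6:.0f} microseconds")
```

Under these assumptions, a source fully to one side arrives roughly 0.66 ms earlier at the near ear, and a 1° shift from center changes the arrival time by only about 9 microseconds. That is the scale of timing the brain is resolving.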

2. Interaural Level Differences (ILDs)

At high frequencies (above ~1600 Hz), sound doesn’t wrap around the head as easily, so the head casts an acoustic shadow. The ear facing the source gets a louder signal than the other. That difference in loudness gives the brain more cues about direction.
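A level difference like this is usually expressed in decibels. The sketch below measures it between two channels using plain RMS. The 4 kHz test tone and the halved right channel are made-up stand-ins for head shadowing, not measured data, and the helper names `rms` and `ild_db` are hypothetical.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def ild_db(left, right):
    """Interaural level difference in dB (positive = left ear louder)."""
    return 20.0 * math.log10(rms(left) / rms(right))

# Synthetic example: a 4 kHz tone, with the right ear at half amplitude
# to stand in for the shadow cast by the head (an assumed value).
sample_rate = 48000
tone = [math.sin(2 * math.pi * 4000 * n / sample_rate) for n in range(4800)]
left = tone
right = [0.5 * s for s in tone]

print(round(ild_db(left, right), 2))  # 6.02
```

Halving the amplitude at one ear works out to about 6 dB of level difference, which is already a strong directional cue.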

3. Spectral Cues from the Pinna

For vertical localization — whether a sound is above, below, or behind — we rely on subtle frequency filtering from the outer ear. These filters are highly individualized. Your own ear shape determines which resonances are emphasized at which angles.

A real surround system stimulates all of these systems together, in natural proportion. Headphones… don’t.

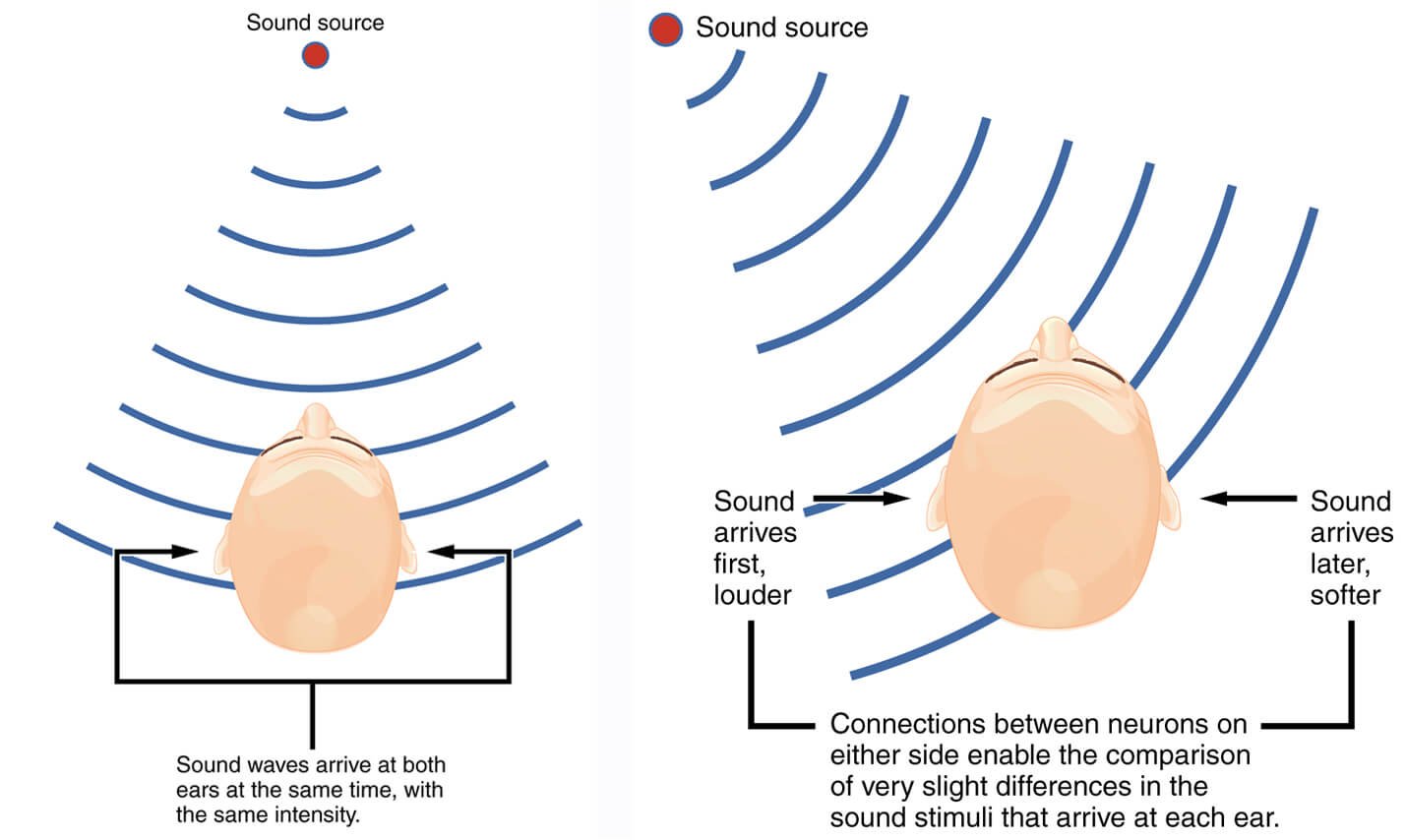

How the brain localizes sound: when a source is centered, sound reaches both ears simultaneously (left); when off-center, one ear hears it slightly earlier and louder (right).

Why Atmos Binaural Is a Compromise

When you fold an Atmos mix down into binaural, you’re no longer listening to true spatial information. You’re listening to a stereo interpretation created using:

- Generic HRTFs (head-related transfer functions)
- Simulated delay and filtering
- Artificial panning models

That means the precise differences your brain needs to localize sound are now approximated. And because HRTFs vary so widely between individuals, what sounds “immersive” to one person might sound smeared or confusing to another.
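At its core, HRTF-based rendering means filtering the source once per ear with a pair of impulse responses. Here is a toy sketch of that idea, not Dolby's renderer or any real product's method. The impulse responses below are invented four-sample placeholders labeled "generic" and "personal", and every name in the code is hypothetical.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution of two sample lists."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out

def render_binaural(mono, hrir_left, hrir_right):
    """Filter one mono source with a left/right impulse-response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)

def onset(channel):
    """Index of the first non-silent sample."""
    return next(i for i, v in enumerate(channel) if abs(v) > 1e-9)

# Made-up impulse responses for a source on the listener's left.
# Real HRIRs are measured and hundreds of samples long.
generic_hrir = {"left": [1.0, 0.3, 0.0, 0.0], "right": [0.0, 0.0, 0.5, 0.2]}
personal_hrir = {"left": [1.0, 0.1, -0.2, 0.0], "right": [0.0, 0.0, 0.0, 0.6]}

click = [1.0, 0.0, 0.0, 0.0]
generic_l, generic_r = render_binaural(click, generic_hrir["left"], generic_hrir["right"])
personal_l, personal_r = render_binaural(click, personal_hrir["left"], personal_hrir["right"])

# Interaural delay (in samples) that each rendering bakes into the same click.
print(onset(generic_r) - onset(generic_l))    # 2
print(onset(personal_r) - onset(personal_l))  # 3
```

The same click ends up with a different interaural delay and a different filter shape depending on whose ears the rendering assumes. A listener whose own response looks like the second set is being handed cues that belong to the first.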

Without a personalized HRTF, Atmos binaural is psychoacoustic trickery. Not surround. Not immersive. Just a clever illusion.

You’ll hear exaggerated wideness, soft phantom reverb tails, and vocal imaging that feels “ghosted” — not because it was placed there, but because it’s being interpreted that way.

And for mastering, where detail, balance, and translation are everything, that simply doesn’t cut it.

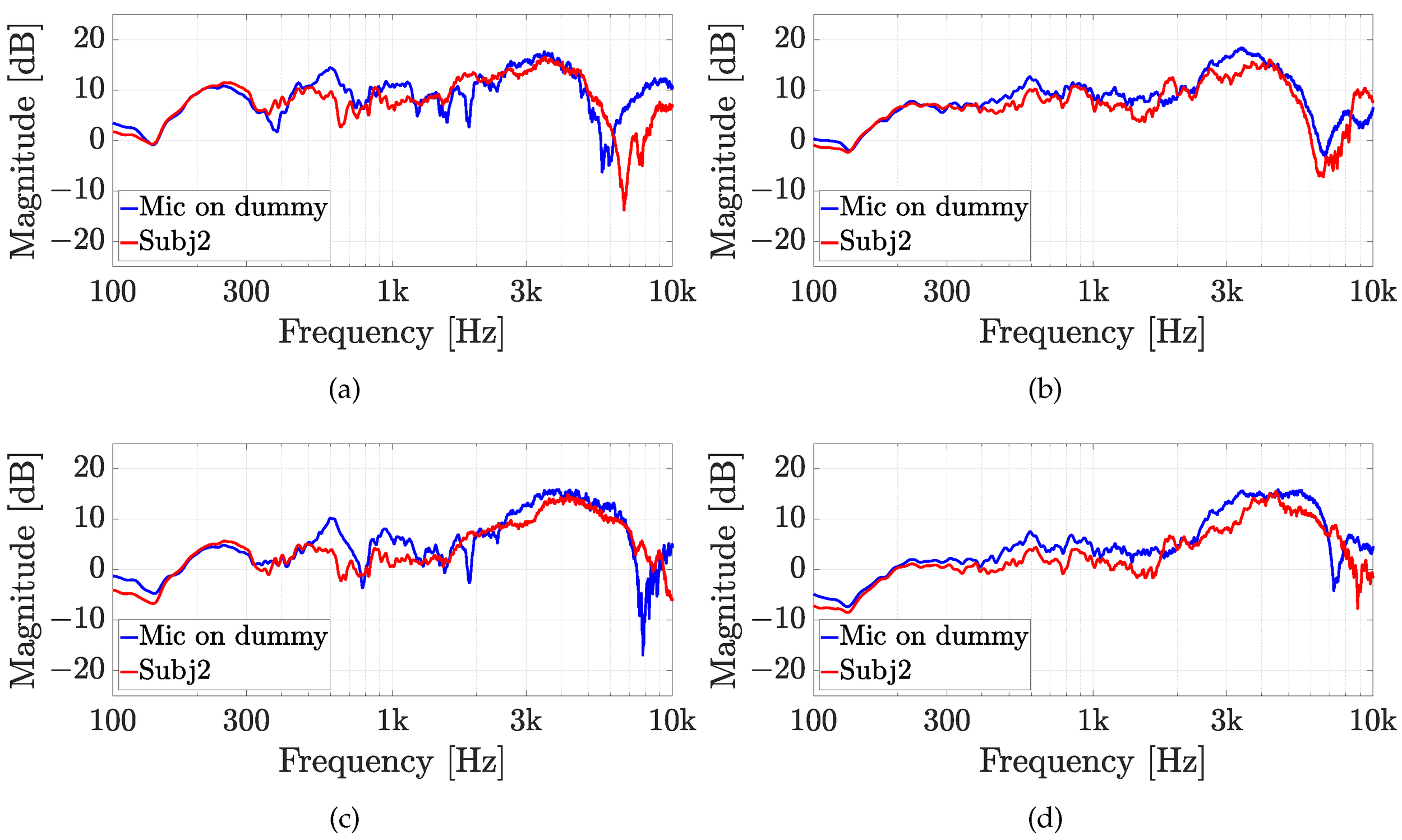

Spectral mismatches between HRTFs of a dummy head and a human subject. This variation means generic binaural rendering will never sound spatially accurate for everyone.

The Dangers of Mixing into Illusion

The bigger issue isn’t that binaural is a compromise. It’s that some people are mixing (or worse, mastering) based on that compromise.

If you’re only listening on AirPods Max, you’re not hearing the real room mix. You’re hearing Dolby’s idea of what someone’s head might sound like. The results are often disembodied, midrange-challenged, and difficult to translate back into stereo or speakers.

I’ve had artists come to me with Atmos bounces that sounded massive in headphones, yet collapsed on stereo monitors. And I’ve heard stereo mixes that felt grounded and focused suddenly bloom unnaturally when rendered in Atmos binaural — proof that the fold-down can significantly reshape perceived intimacy, not always for the better. Without access to the full monitoring environment, you’re flying blind.

What I Do Instead

I work in stereo. On Audeze LCD-X headphones and my ATCs, because I know what I’m hearing. The illusion isn’t necessary. I can tell you exactly what’s in the mix, what’s working, and what might not translate across systems.

And all of my musical memories — the moments I laughed, cried, danced — were experienced in stereo. Hearing Pet Sounds in Atmos feels completely wrong to me when the stereo mix is so integral to the music.

My mastering studio in Redmond, on the Eastside of Seattle, WA.

I’m not anti-Atmos. But I’m deeply skeptical of any process that fakes immersion while calling it real. Especially when artists are paying for it. If you want to do Atmos, do it right. Hire someone with a certified room, who knows what they’re doing, and can show you how it actually sounds in space.

If you want clarity, depth, emotional presence, and precise finalization, you don’t need to simulate surround. You need someone who can hear.

PS. Here’s an interesting fact: the first commercially released stereo LPs hit the market in 1958. By 1969, the Beatles didn’t even release a mono version of Abbey Road in the UK. Mono was effectively dead in little more than a decade.

Technologies are supposed to move faster now, not slower. Dolby Atmos debuted in cinemas in 2012 and has been used in music since 2017. As of 2026, I can still count my Atmos enquiries on one hand, and so can most engineers I know.